Training Memory-Intensive Deep Learning Models with PyTorch's Distributed Data Parallel | Naga's Blog

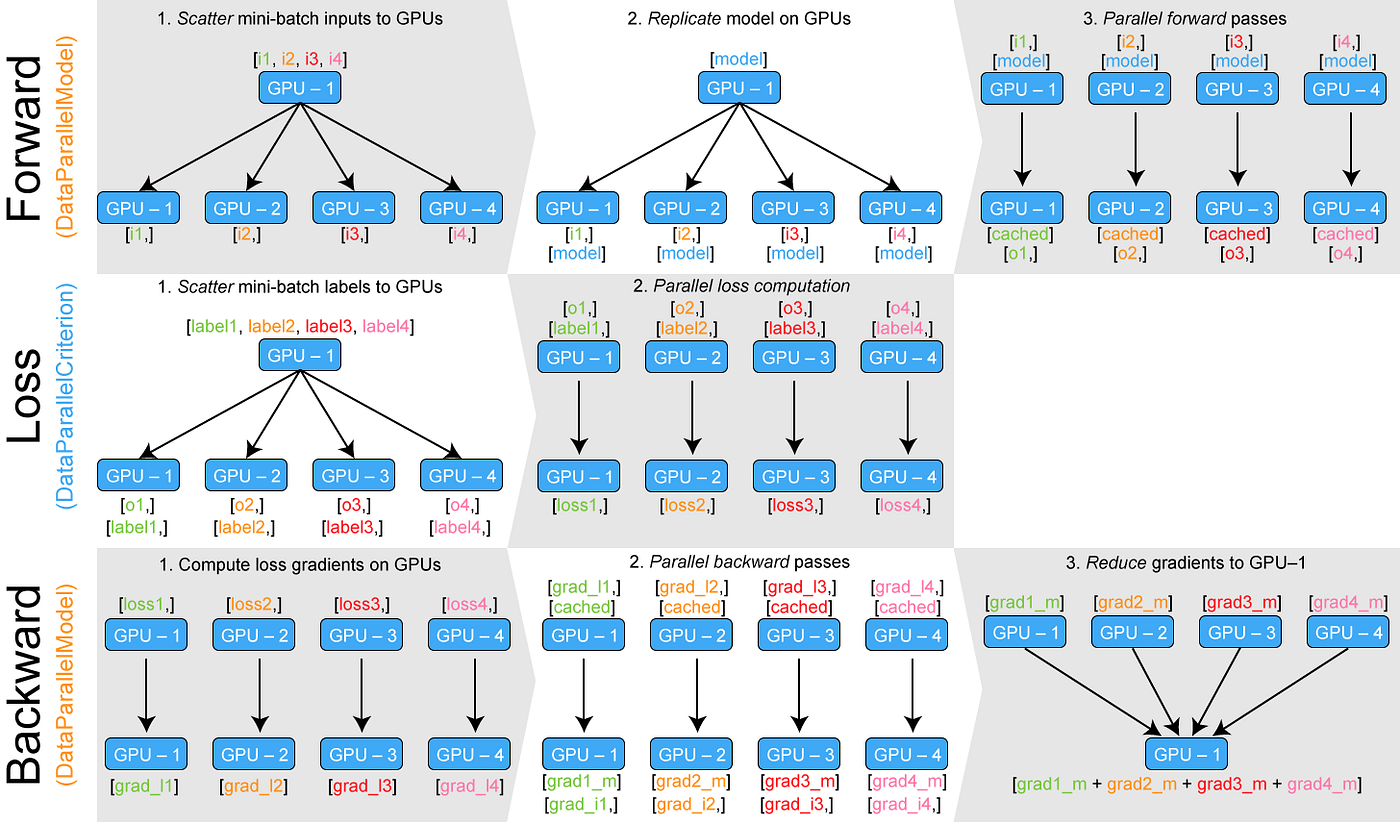

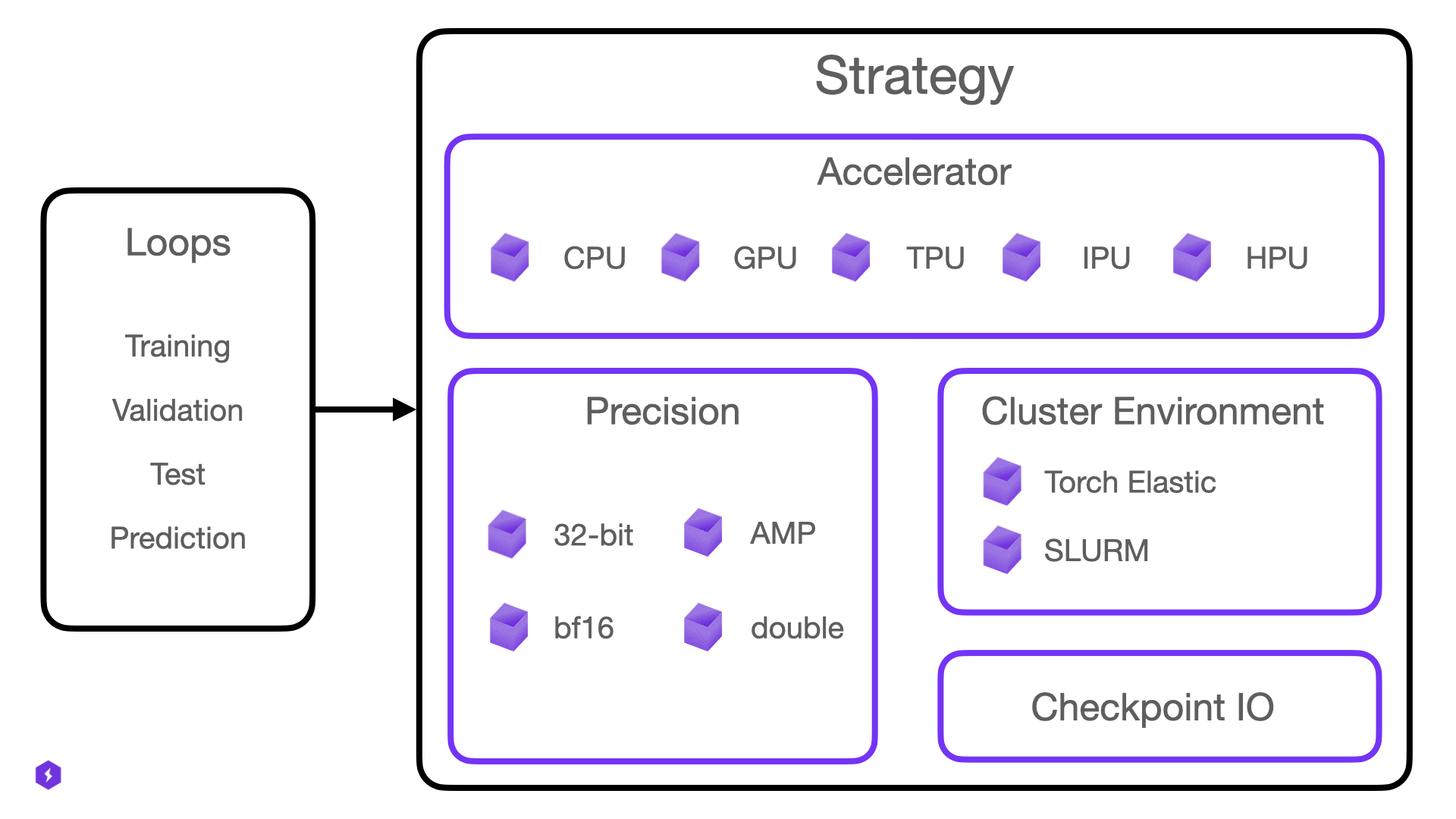

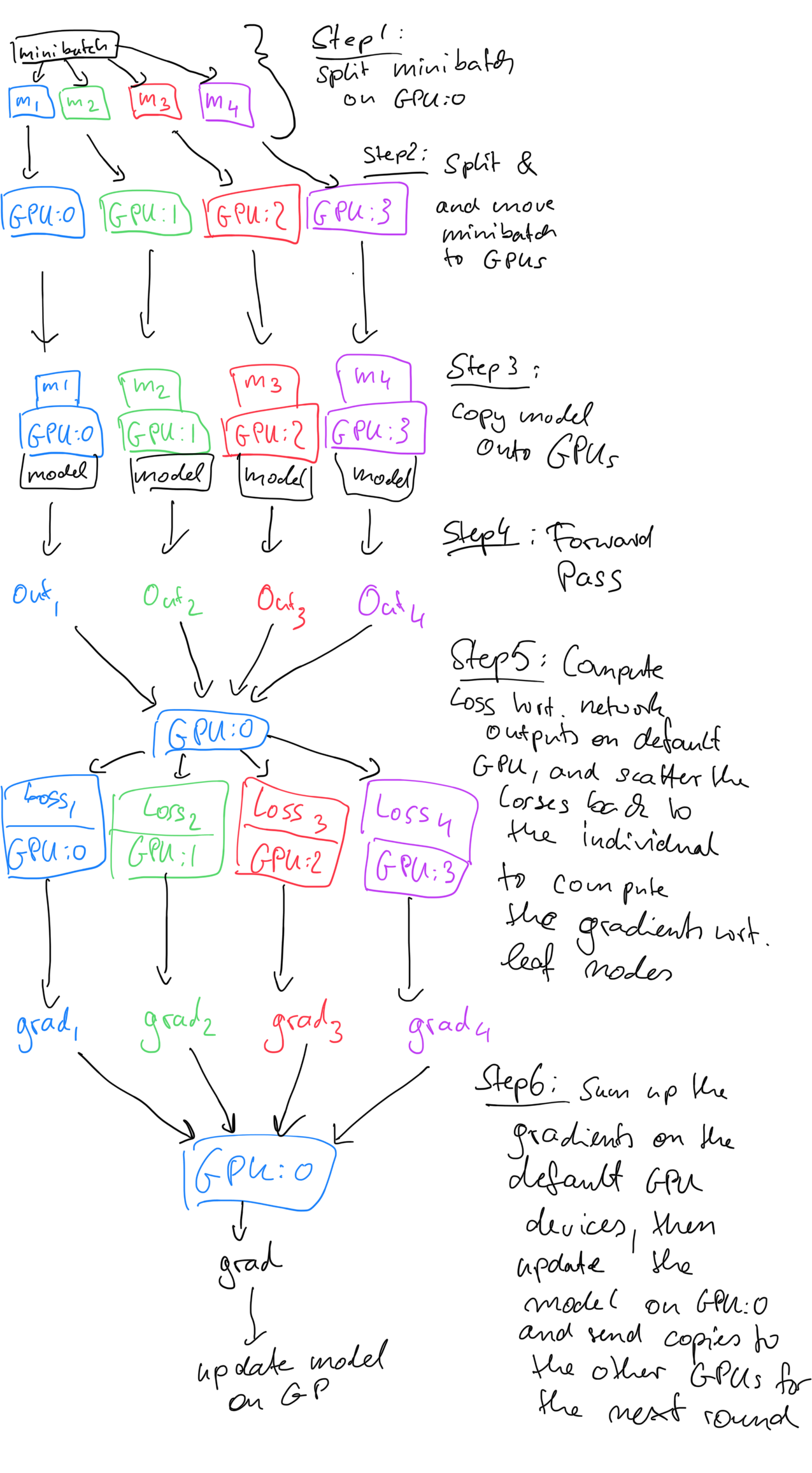

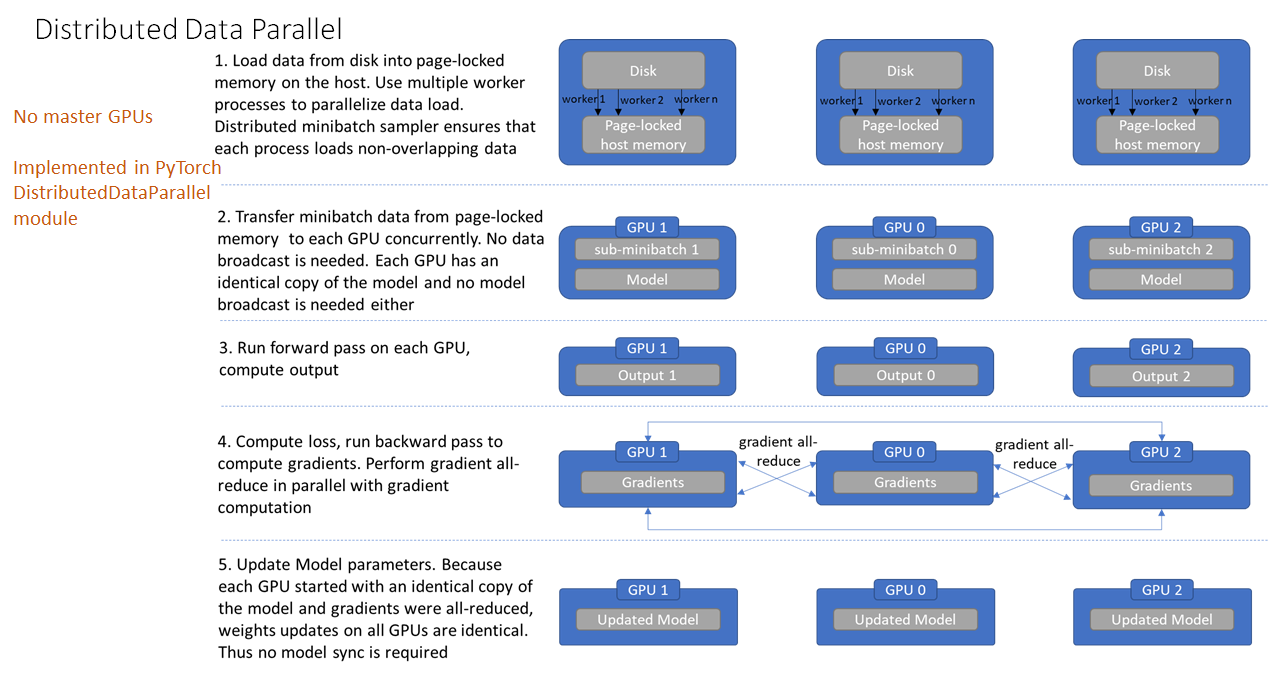

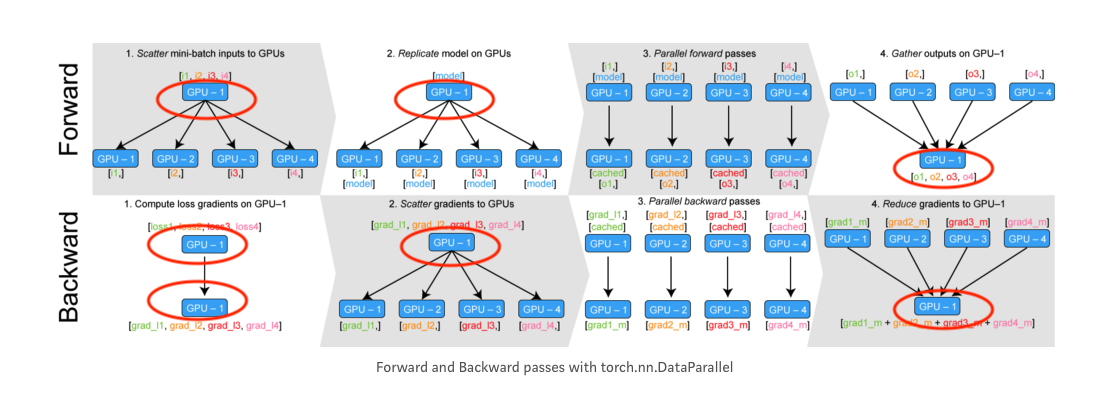

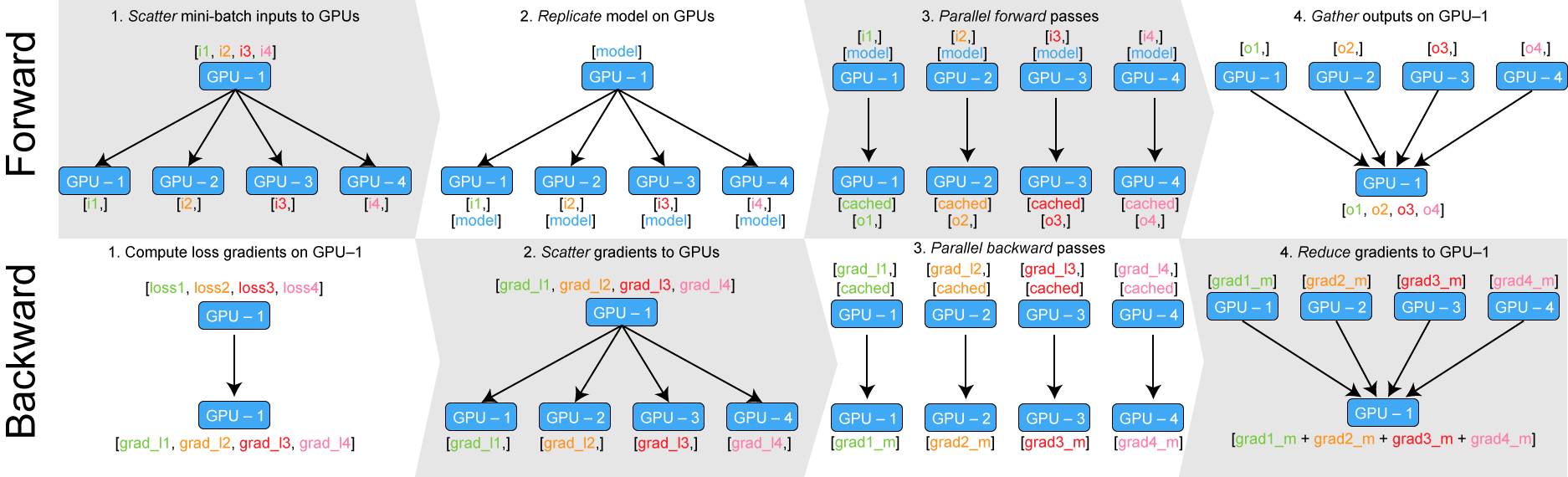

How distributed training works in Pytorch: distributed data-parallel and mixed-precision training | AI Summer

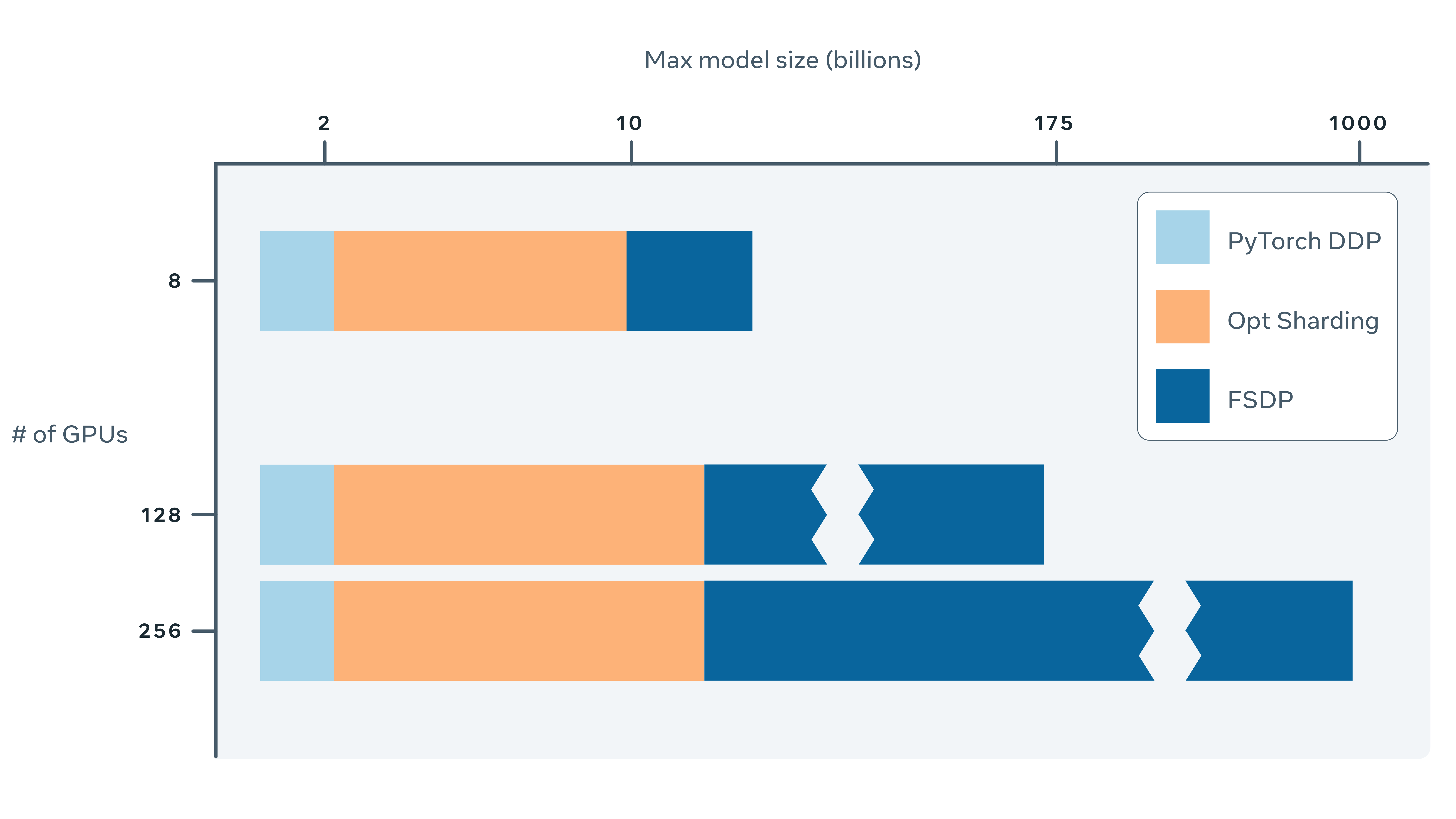

Training Memory-Intensive Deep Learning Models with PyTorch's Distributed Data Parallel | Naga's Blog

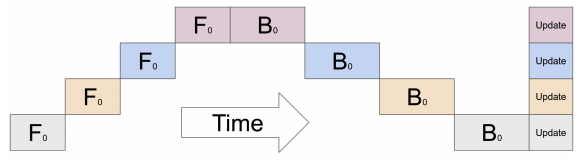

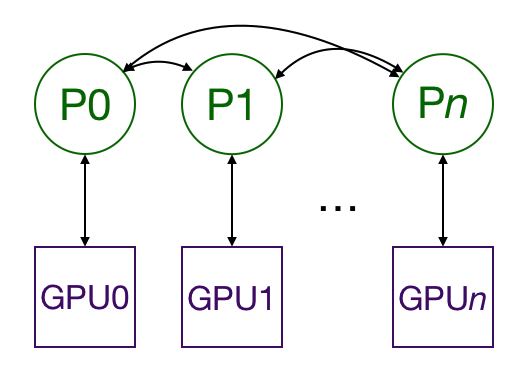

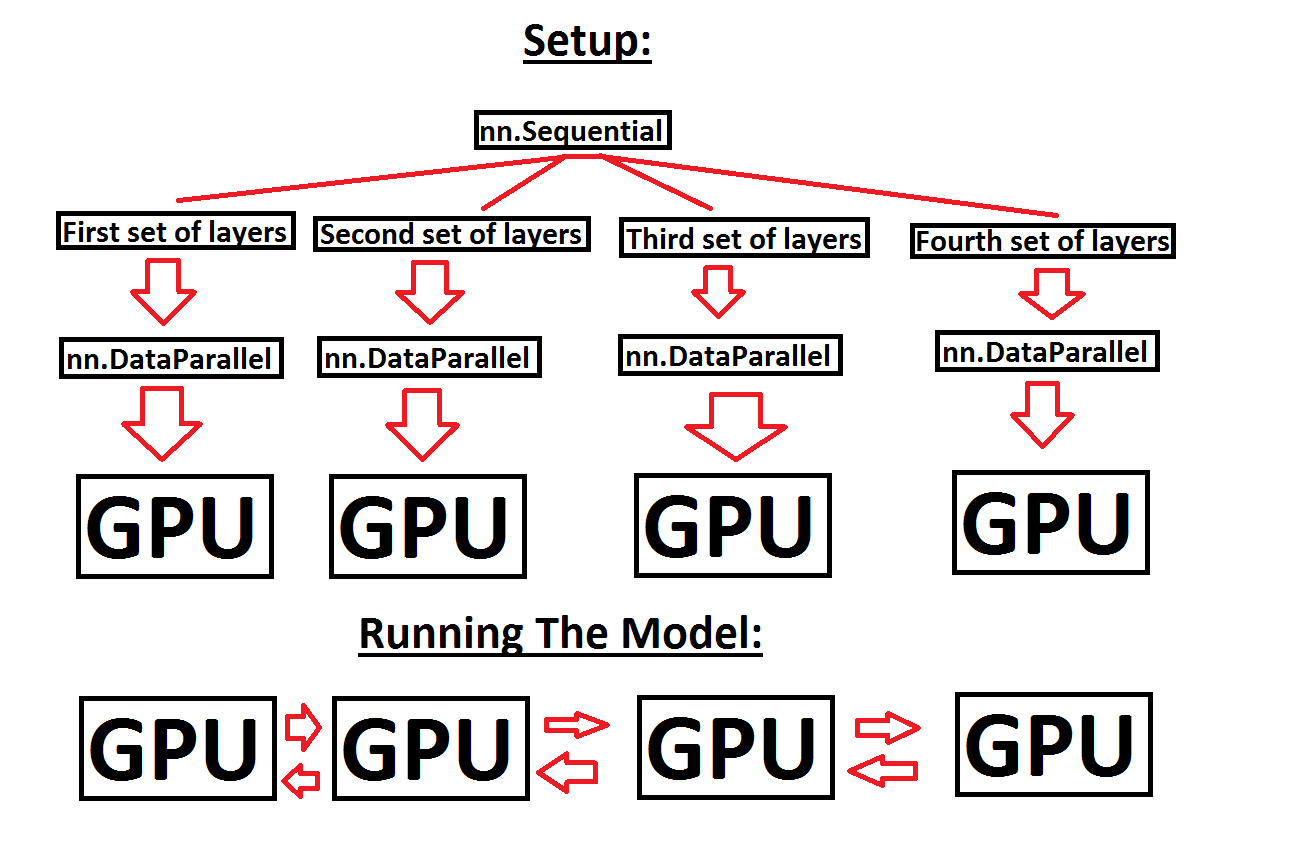

Help with running a sequential model across multiple GPUs, in order to make use of more GPU memory - PyTorch Forums