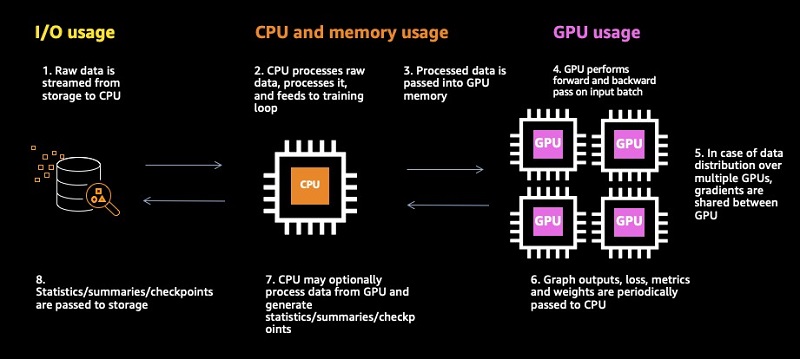

Identifying training bottlenecks and system resource under-utilization with Amazon SageMaker Debugger | AWS Machine Learning Blog

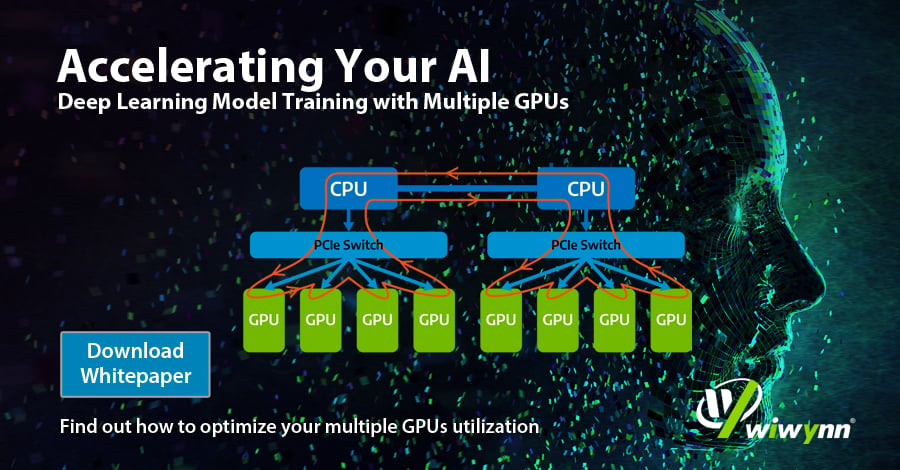

Multi-GPU and distributed training using Horovod in Amazon SageMaker Pipe mode | AWS Machine Learning Blog

Monitor and Improve GPU Usage for Training Deep Learning Models | by Lukas Biewald | Towards Data Science

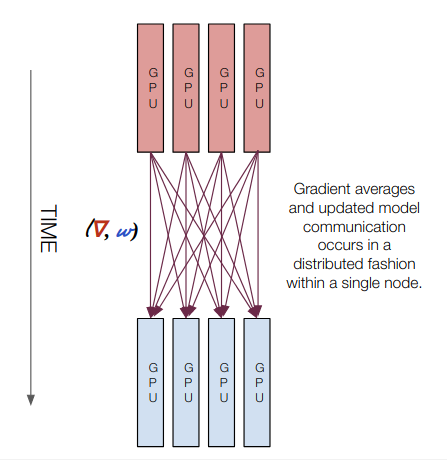

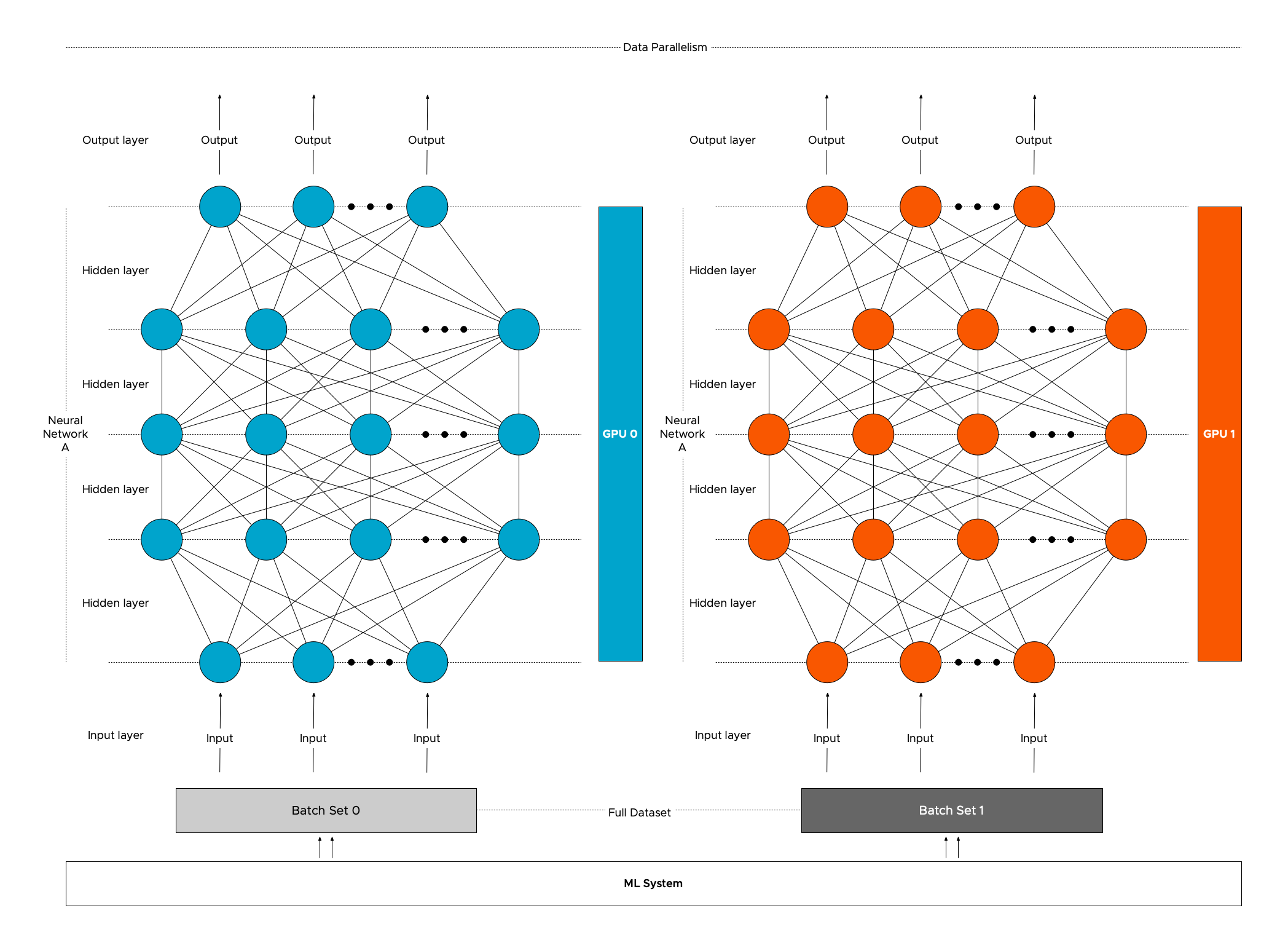

Multi-GPU training. Example using two GPUs, but scalable to all GPUs...

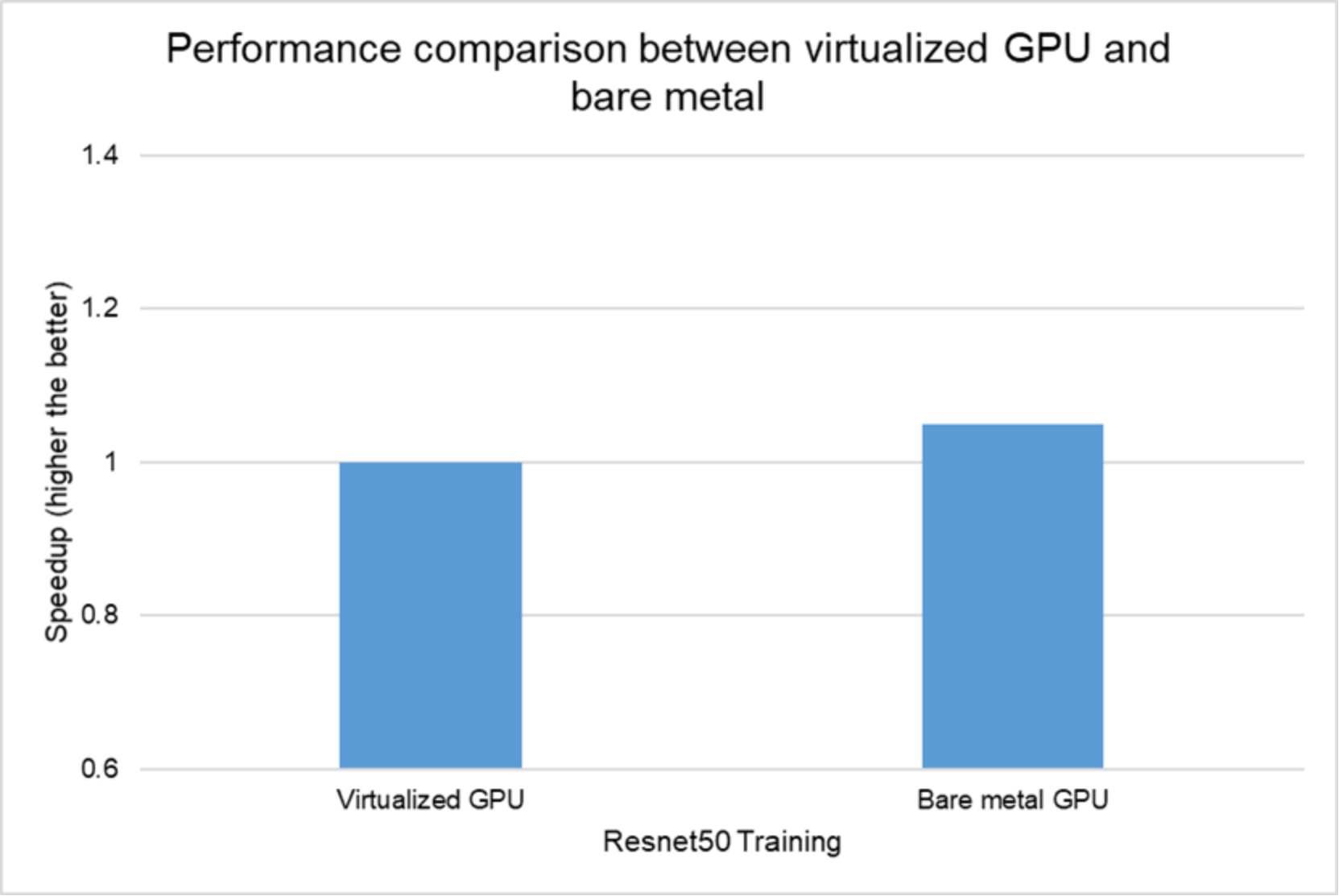

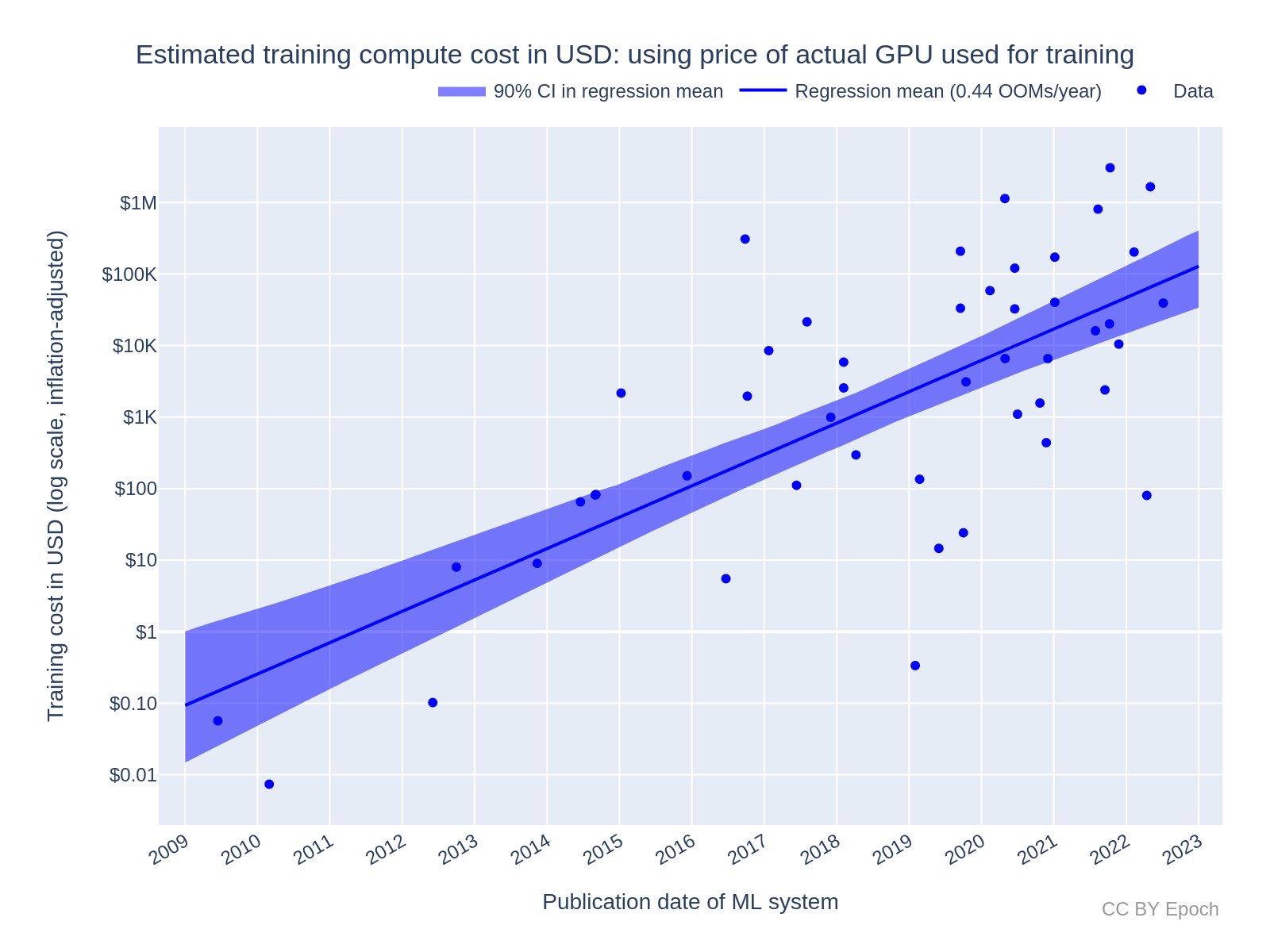

Data science experts compared the time and monetary cost of training the model and chose the best option.

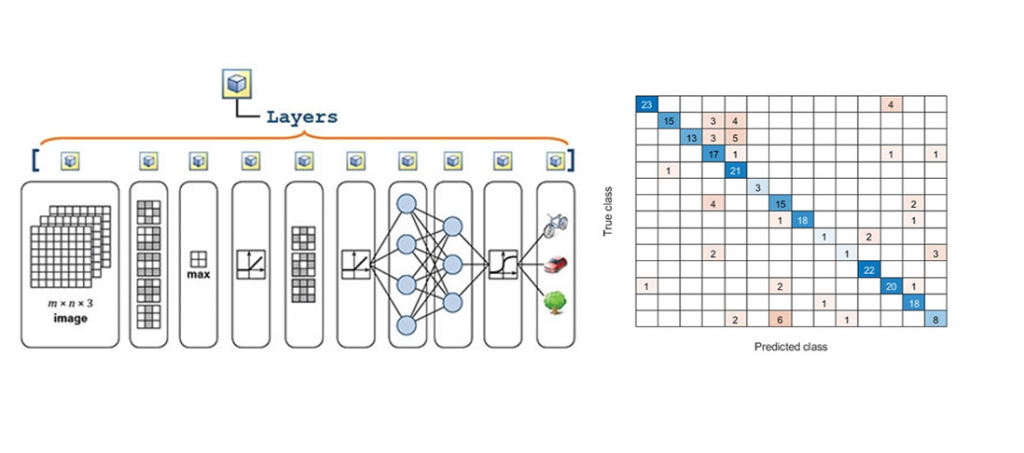

Accelerate computer vision training using GPU preprocessing with NVIDIA DALI on Amazon SageMaker | AWS Machine Learning Blog

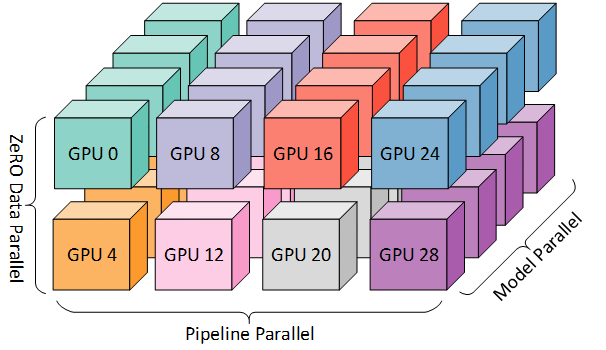

DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

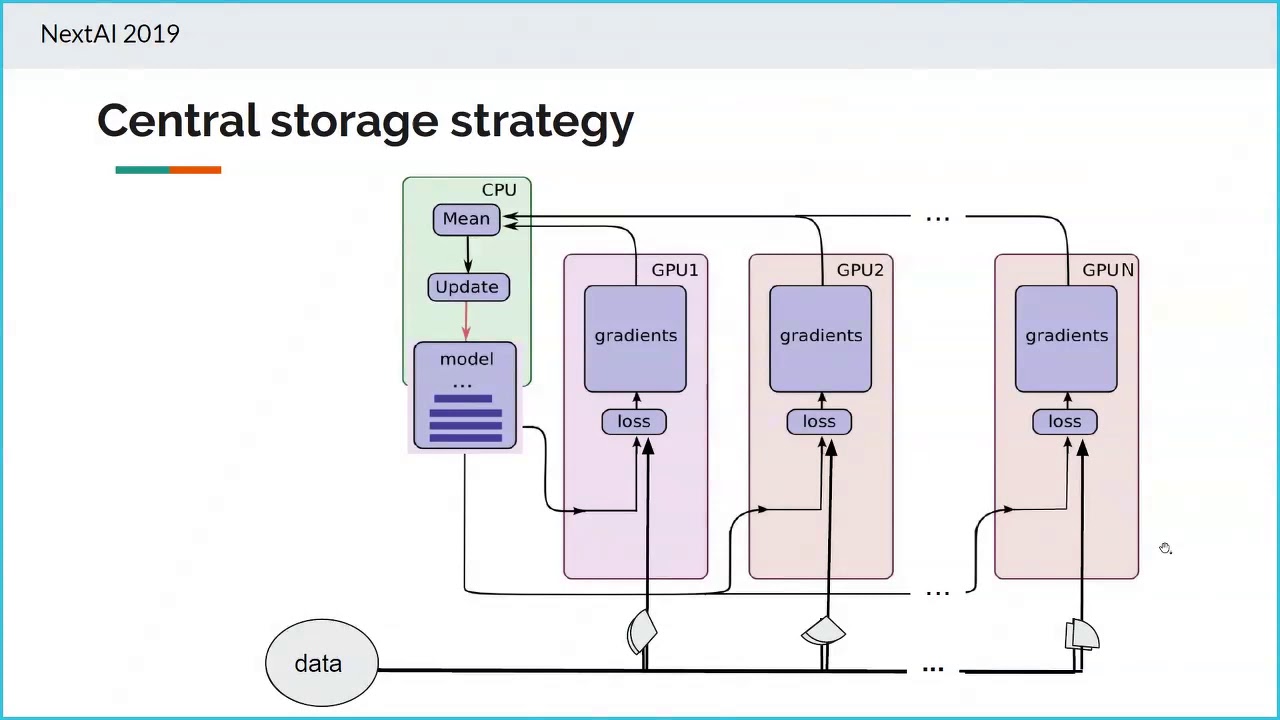

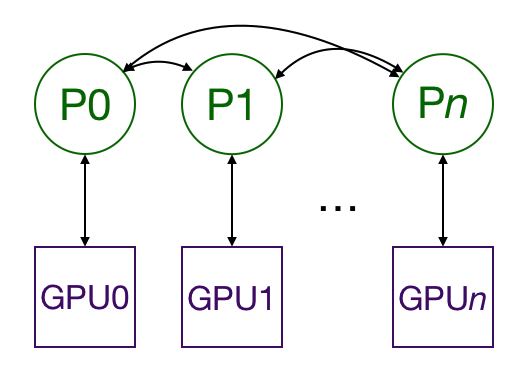

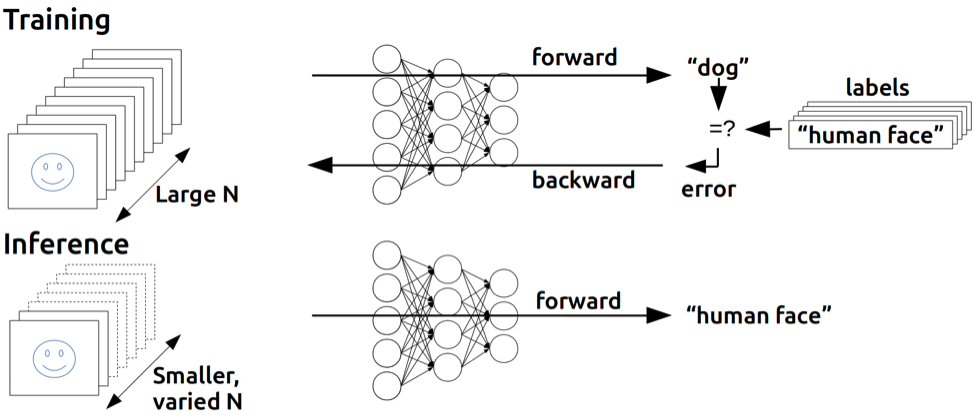

How distributed training works in Pytorch: distributed data-parallel and mixed-precision training | AI Summer